AI adoption has become one of the most transformative forces in modern business environments, reshaping industries from healthcare to finance and retail. Organizations are investing heavily in artificial intelligence tools, expecting faster decision-making, automation, and competitive advantage. However, despite massive investment, a significant portion of initiatives fail to deliver measurable results, revealing deeper structural issues beyond technology itself.

The reality is that many enterprises struggle not because AI tools are ineffective, but because leadership frameworks, accountability structures, and strategic oversight are missing. This creates a gap between innovation and execution. In many cases, AI transformation is a problem of governance: success depends more on management systems than on algorithms or data science capabilities.

Understanding this challenge is critical in 2026, as AI systems become more autonomous and deeply integrated into business operations. Without strong governance, organizations risk ethical failures, compliance violations, and wasted investments. The issue is no longer about whether AI works, but whether organizations are prepared to manage it responsibly and strategically.

Understanding Modern AI Transformation in Organizations

AI transformation refers to the process of embedding artificial intelligence into core business functions to improve efficiency, decision-making, and innovation. It is not just about using AI tools but about reshaping workflows, business models, and organizational structures to align with data-driven intelligence systems.

In today’s competitive environment, companies are rapidly integrating machine learning, predictive analytics, and generative AI solutions. However, many organizations confuse experimentation with transformation. They deploy pilot projects without a long-term roadmap, leading to fragmented systems that fail to scale effectively across departments and operations.

The expectation is often that AI will automatically solve business problems, but the reality is more complex. Without alignment between strategy, data infrastructure, and leadership direction, AI becomes a collection of disconnected tools rather than a unified transformation strategy. This disconnect is why AI transformation is a problem of governance rather than of technology alone.

The Real Meaning of AI Governance in the Digital Era

AI governance refers to the frameworks, policies, and accountability systems that guide how artificial intelligence is developed, deployed, and monitored within an organization. It ensures that AI systems are ethical, transparent, compliant, and aligned with business objectives.

A strong governance structure defines who is responsible for AI decisions, how risks are managed, and what standards must be followed. Without this clarity, organizations often experience confusion between technical teams, executives, and compliance departments, resulting in delayed or failed implementations.

In many organizations, governance is treated as an afterthought rather than a foundation. This leads to uncontrolled experimentation, inconsistent data usage, and a lack of oversight. As a result, AI transformation becomes a problem of governance: success depends on how well organizations manage structure, accountability, and strategic control.

Why AI Transformation Fails at a High Rate

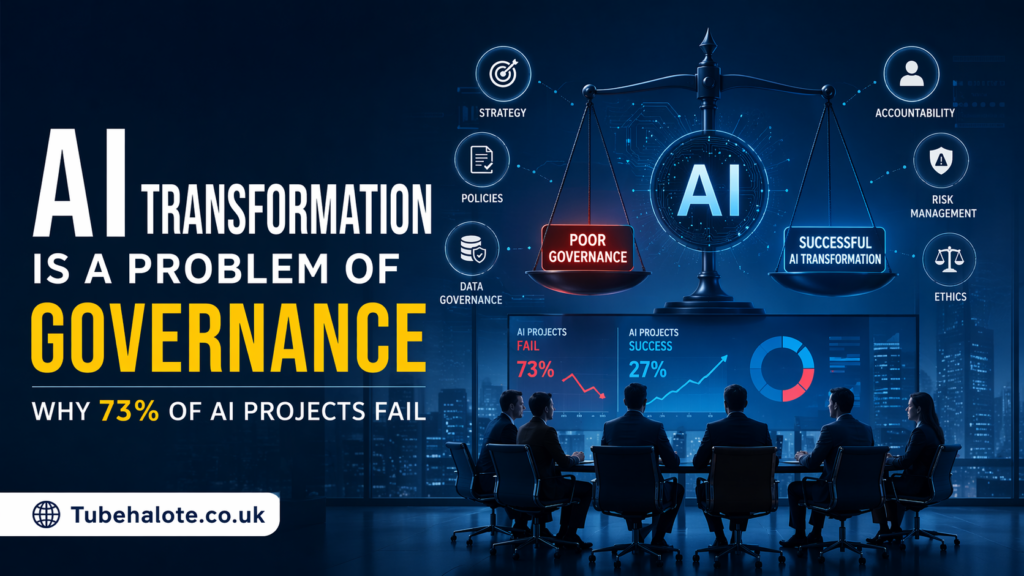

One of the most concerning trends in modern technology adoption is the high failure rate of AI projects. By some reported estimates, nearly 73 percent of AI initiatives fail to reach production or generate the expected ROI. These failures are rarely due to algorithmic limitations; they usually stem from organizational weaknesses.

Many projects remain stuck in pilot phases because companies lack structured deployment processes. Teams experiment with models but fail to integrate them into real business operations. This creates a cycle of endless testing without actual value creation or measurable impact on performance.

Another major issue is weak risk management. AI systems often introduce ethical concerns, data privacy risks, and compliance challenges. Without governance, these risks escalate quickly, forcing organizations to pause or abandon projects entirely. This reinforces the idea that AI transformation is, at its core, a problem of governance.

The Leadership Gap in AI-Driven Organizations

Leadership plays a critical role in determining whether AI transformation succeeds or fails. In many organizations, executives delegate AI initiatives entirely to technical teams without providing strategic direction or oversight. This lack of involvement creates misalignment between business goals and technological execution.

Effective AI leadership requires understanding not just the potential of technology but also its limitations and risks. Leaders must define clear objectives, allocate responsibility, and ensure that AI initiatives are aligned with long-term business strategy. Without this guidance, projects often drift without purpose.

Cross-functional collaboration is also essential. AI transformation cannot be owned by a single department. It requires coordination between IT, legal, compliance, and business units. Without leadership-driven governance, the same failures continue to repeat across industries, which is why AI transformation is a problem of governance.

Key Pillars of Effective AI Governance

A strong AI governance framework is built on several essential pillars that ensure responsible and scalable implementation. The first pillar is ethical AI usage, which focuses on fairness, transparency, and bias reduction in machine learning models. Organizations must ensure that AI decisions do not create unintended harm or discrimination.

The second pillar is risk and compliance management. AI systems must adhere to regulatory standards, especially in regions like the United States and Europe where data protection laws are strict. Continuous monitoring is required to ensure compliance with evolving legal frameworks and industry standards.

Data stewardship is another critical pillar. High-quality, well-managed data is the foundation of effective AI systems. Poor data governance leads to inaccurate predictions and unreliable outcomes, which ultimately reduce trust in AI solutions across the organization.

Finally, operational accountability ensures that every AI system has clearly defined ownership. This prevents confusion and ensures that decisions can be traced back to responsible teams or individuals. When any of these pillars is missing or poorly implemented, AI transformation becomes a problem of governance.
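The operational accountability pillar can be made concrete with a simple ownership registry. The following is a minimal sketch, not a prescribed implementation: the `AISystemRecord` fields, the `find_unowned` helper, and the example system and team names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemRecord:
    """One entry in a hypothetical AI system inventory."""
    name: str
    business_owner: str   # accountable person or team on the business side
    technical_owner: str  # team that builds and maintains the system
    risk_tier: str        # illustrative labels: "low", "medium", "high"


def find_unowned(records):
    """Return the names of systems missing a business or technical owner."""
    return [
        r.name
        for r in records
        if not r.business_owner.strip() or not r.technical_owner.strip()
    ]


# Illustrative data only.
registry = [
    AISystemRecord("churn-model", "Retention team", "ML platform team", "medium"),
    AISystemRecord("resume-screener", "", "Data science team", "high"),
]

unowned = find_unowned(registry)
print(unowned)
```

A check like this could run as part of a periodic governance review so that systems without a named owner are flagged before they reach production.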

Breaking Down the Pilot-to-Production Problem

One of the most common failure points in AI adoption is the transition from pilot projects to full-scale production. Many organizations successfully develop prototypes but struggle to integrate them into real business environments where they can deliver measurable value.

This gap often occurs because pilot projects are designed in controlled environments that do not reflect real-world complexity. When organizations attempt to scale these models, they encounter issues such as data inconsistency, system incompatibility, and lack of operational support.

Without strong governance, there is no structured pathway to move from experimentation to execution. This leads to wasted resources and abandoned initiatives. In this context, AI transformation is a problem of governance because scaling AI requires structured oversight and long-term planning.

Data Governance and Its Critical Role in AI Success

Data is the foundation of all AI systems, and poor data governance is one of the leading causes of failure. Many organizations operate with fragmented data systems where information is stored in silos across departments, making it difficult to build reliable AI models.

Inconsistent data quality leads to inaccurate predictions and unreliable outputs. When organizations fail to establish clear data ownership and management standards, AI systems become unstable and unpredictable. This reduces trust in the technology and limits adoption across the organization.

Strong data governance ensures that data is accurate, accessible, secure, and properly managed. It also ensures compliance with privacy regulations and internal policies. Without it, even advanced AI models cannot function effectively, which is another reason AI transformation is a problem of governance.
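One way to turn "accurate and properly managed" into something checkable is a data quality gate that runs before a dataset is used for modeling. The sketch below measures only completeness, and everything in it is an assumption for illustration: the function names, the required fields, the sample rows, and the 0.95 threshold are hypothetical rather than a recommended standard.

```python
def completeness(rows, required_fields):
    """Fraction of rows in which every required field is present and non-empty."""
    if not rows:
        return 0.0
    complete = sum(
        all(row.get(field) not in (None, "") for field in required_fields)
        for row in rows
    )
    return complete / len(rows)


def passes_quality_gate(rows, required_fields, min_completeness=0.95):
    """True when the dataset meets the (hypothetical) completeness threshold."""
    return completeness(rows, required_fields) >= min_completeness


# Illustrative records: two of the four rows have a missing value.
customers = [
    {"id": 1, "region": "EU", "signup_date": "2025-01-10"},
    {"id": 2, "region": "", "signup_date": "2025-02-03"},
    {"id": 3, "region": "US", "signup_date": None},
    {"id": 4, "region": "US", "signup_date": "2025-03-21"},
]

required = ["id", "region", "signup_date"]
score = completeness(customers, required)
gate_ok = passes_quality_gate(customers, required)
print(score, gate_ok)
```

In practice a gate like this would sit alongside checks for freshness, duplication, and access control; completeness is used here only because it is the simplest to show.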

Risk Management and Ethical Considerations in AI Systems

AI introduces a wide range of risks, including ethical concerns, security vulnerabilities, and reputational damage. Without proper governance, these risks can escalate quickly and create serious consequences for organizations.

Ethical risks include bias in decision-making algorithms, lack of transparency, and unintended discrimination. These issues can damage trust among customers and stakeholders if not properly addressed through governance frameworks.

Operational risks include system failures, incorrect predictions, and cybersecurity threats. Organizations must continuously monitor AI systems to ensure they operate within acceptable boundaries. Unmanaged risk can undermine entire initiatives, which again makes AI transformation a problem of governance.
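Monitoring "within acceptable boundaries" implies that those boundaries are written down somewhere a program can check them. The following is a rough sketch under that assumption. The `Bound` type, the `check_bounds` helper, the metric names, and the limits are hypothetical and do not come from any particular monitoring product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bound:
    """An agreed acceptable range for one monitored metric."""
    metric: str
    minimum: float
    maximum: float


def check_bounds(observed, bounds):
    """Return a list of violation messages; an empty list means all in bounds."""
    violations = []
    for b in bounds:
        value = observed.get(b.metric)
        if value is None:
            violations.append(f"{b.metric}: no measurement reported")
        elif not (b.minimum <= value <= b.maximum):
            violations.append(
                f"{b.metric}: {value} outside [{b.minimum}, {b.maximum}]"
            )
    return violations


# Illustrative limits: one performance metric and one fairness-style metric.
bounds = [
    Bound("accuracy", 0.90, 1.00),
    Bound("approval_rate_gap", 0.00, 0.05),
]

observed = {"accuracy": 0.93, "approval_rate_gap": 0.08}
alerts = check_bounds(observed, bounds)
print(alerts)
```

Treating a missing measurement as a violation is a deliberate choice in this sketch: a system that stops reporting is handled as a governance issue rather than silently passing.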

Building a Governance-First AI Culture

Creating a successful AI strategy requires more than tools and technology; it requires a cultural shift within the organization. A governance-first culture ensures that every AI initiative is evaluated through the lens of responsibility, accountability, and long-term impact.

This cultural shift begins with leadership commitment. Executives must prioritize governance as a core business function rather than a compliance requirement. This sets the tone for how AI is developed and deployed across the organization.

Training and awareness are also essential. Employees at all levels must understand the importance of responsible AI usage and the risks associated with poor governance. Without this cultural foundation, AI transformation remains a problem of governance that cannot be solved through technology alone.

Moving from Experimentation to Scalable AI Execution

To achieve successful AI transformation, organizations must move beyond experimentation and focus on scalable execution. This requires structured processes that guide AI projects from ideation to full deployment.

Standardization is key to this process. Organizations must develop repeatable frameworks for model development, testing, validation, and deployment. This reduces uncertainty and improves efficiency across multiple projects.

Performance measurement is also essential. Organizations must define clear success metrics that align with business outcomes. Without measurable impact, AI projects risk becoming experimental exercises rather than strategic assets. This is why AI transformation is a problem of governance in many enterprises.
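A repeatable path from ideation to deployment can be expressed as a stage gate: a project advances only when the checklist for its current stage is complete. The sketch below is one possible shape for such a gate. The stage names follow the steps mentioned above, while the `next_stage` function and the checklist items are hypothetical.

```python
STAGES = ["ideation", "development", "testing", "validation", "deployment"]


def next_stage(current, checklist):
    """Advance one stage only if every checklist item for the current stage is done.

    `checklist` maps an item description to True (done) or False (open).
    An empty checklist never advances, so a gate cannot be passed by omission.
    """
    if current not in STAGES:
        raise ValueError(f"unknown stage: {current}")
    if current == STAGES[-1]:
        return current  # already deployed; nothing further to advance to
    if checklist and all(checklist.values()):
        return STAGES[STAGES.index(current) + 1]
    return current


# Illustrative checklist for a model waiting at the validation gate.
validation_checklist = {
    "holdout metrics reviewed": True,
    "business success metric defined": False,
}

blocked = next_stage("validation", validation_checklist)

validation_checklist["business success metric defined"] = True
promoted = next_stage("validation", validation_checklist)
print(blocked, promoted)
```

Making "business success metric defined" a checklist item ties the standardization step to the performance measurement step: a model cannot be promoted until someone has stated how its impact will be measured.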

The Future of AI Governance in 2026 and Beyond

As AI technology continues to evolve, governance frameworks will become even more important. Emerging regulations in the United States and globally are pushing organizations toward greater transparency and accountability in AI usage.

Future AI systems will likely become more autonomous, making governance even more complex and critical. Organizations will need advanced monitoring systems to ensure ethical and safe operation of intelligent systems.

In the long term, companies with strong governance frameworks will have a competitive advantage. They will be able to scale AI faster, reduce risk, and build greater trust with customers and stakeholders. This reinforces the idea that AI transformation is a problem of governance, and one that also presents an opportunity for leadership.

Conclusion: Governance as the Foundation of AI Success

The success or failure of artificial intelligence initiatives is not determined solely by technology but by governance structures that guide their implementation. Without clear accountability, risk management, and strategic alignment, even the most advanced AI systems fail to deliver value.

Organizations must recognize that AI transformation is a problem of governance and not just a technical challenge. By prioritizing leadership involvement, data quality, ethical standards, and structured execution, businesses can turn AI into a powerful driver of growth and innovation.

FAQs

What does "AI transformation is a problem of governance" mean?

It means that AI success depends more on leadership, accountability, and management systems than on technology itself. Poor governance leads to failed AI initiatives even when tools are advanced.

Why do most AI projects fail?

Most AI projects fail due to a lack of clear ownership, weak data governance, poor strategy alignment, and an inability to scale from pilot to production environments.

What is AI governance?

AI governance is a framework of rules, policies, and responsibilities that ensure AI systems are ethical, compliant, and aligned with business objectives.

How can organizations improve AI governance?

Organizations can improve governance by defining clear ownership, improving data quality, establishing ethical guidelines, and aligning AI strategy with business goals.

Why is leadership important in AI transformation?

Leadership ensures strategic direction, accountability, and coordination across departments, which is essential for successful AI implementation.

What are the biggest risks of poor AI governance?

The biggest risks include biased decisions, compliance violations, security issues, financial loss, and reputational damage.

Is AI governance regulated in the USA?

Yes, AI governance is increasingly influenced by data privacy laws, ethical AI guidelines, and emerging regulatory frameworks in the United States.